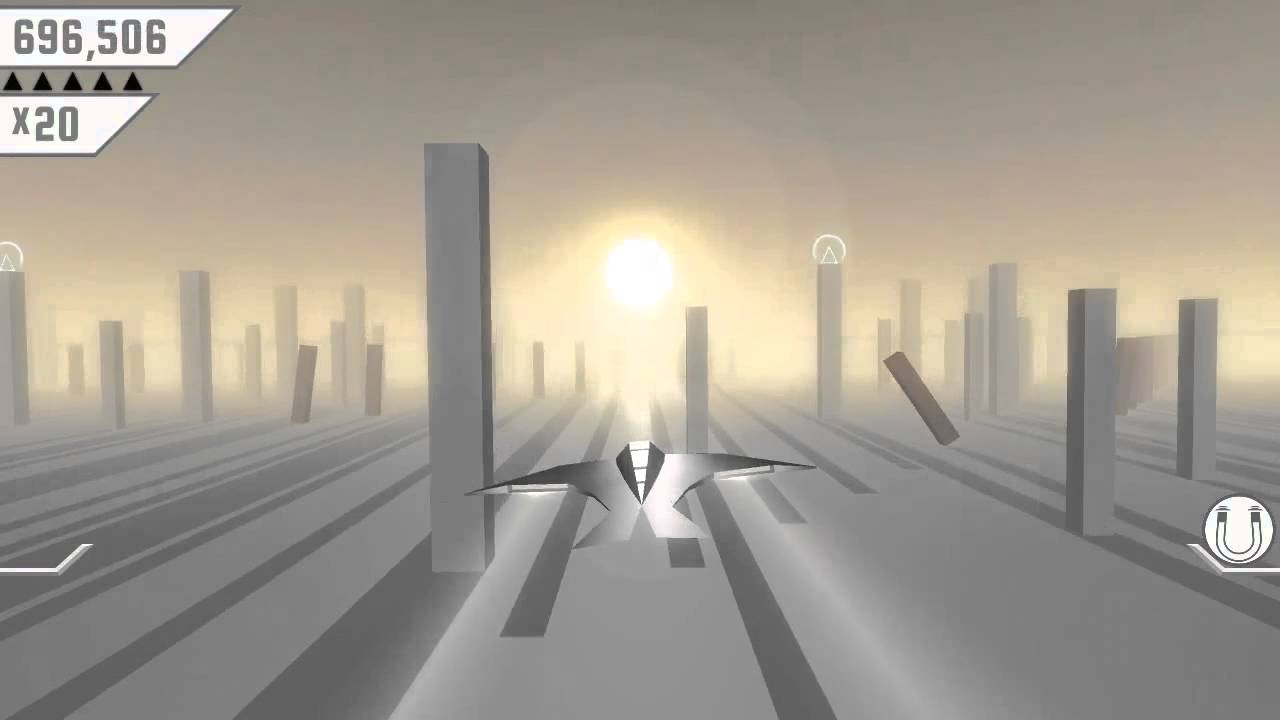

Machine Learning concepts can be used as powerful tools by game developers to create NPC behaviours, help balance game mechanics or automate quality assurance. In 2017, Unity released the ML-Agents Plugin to help developers integrate Machine Learning into their games. I gave it a try by making an AI that taught itself to play a Flappy Bird-style game using neural networks and reinforcement learning. It turned out pretty great, so here's a summary of my implementation.

The Unity ML-Agents Plugin provides tools for several Machine Learning methods: reinforcement learning, imitation learning and neuroevolution. This time, I went with reinforcement learning. Birds (the learning agents) go through a training session in which their inputs (or observations) are processed by a neural network that outputs the action a player would take, which in this case is flapping the bird's wings to jump up. The agent is given reward points when it does something good, and during training the network's weights are adjusted so that its outputs earn as many reward points as possible. The ML-Agents Plugin works with the TensorFlow Machine Learning library to train the neural network during the training session. In short, all we need to do is feed in the observations, handle the output actions, and give reward points when the bird agent is in a good position or take some away when it dies by hitting an obstacle.

A visualization of the bird agent's neural network. The hidden layer is learned during training, and its job is to transform the inputs into the outputs.
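To make the diagram concrete, here is a minimal forward pass through a network of that shape: 4 observations, one hidden layer, 1 output. This is a sketch under stated assumptions, not what ML-Agents builds internally: the hidden layer size (`HIDDEN_UNITS = 8`), the `tanh` hidden activation and the sigmoid output (used here only to keep the result between 0 and 1, as the post describes) are all hypothetical choices. The weights are random; training is what would adjust them.

```python
import math
import random
from typing import List, Tuple

# A layer is a (weights, biases) pair; weights has one row per output unit.
Layer = Tuple[List[List[float]], List[float]]

def sigmoid(z: float) -> float:
    """Numerically stable logistic function, squashing z into [0, 1]."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def init_layer(n_in: int, n_out: int, rng: random.Random) -> Layer:
    """Random weights and zero biases; training would adjust these."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

def dense(inputs: List[float], layer: Layer, activation) -> List[float]:
    """Fully connected layer: activation(W x + b) for each output unit."""
    weights, biases = layer
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

def forward(observations: List[float], hidden: Layer, output: Layer) -> float:
    """4 observations -> hidden layer (tanh) -> 1 flap value."""
    h = dense(observations, hidden, math.tanh)
    return dense(h, output, sigmoid)[0]

HIDDEN_UNITS = 8  # hypothetical size, chosen only for illustration
rng = random.Random(42)
hidden_layer = init_layer(4, HIDDEN_UNITS, rng)
output_layer = init_layer(HIDDEN_UNITS, 1, rng)

flap_value = forward([0.7, 0.3, -0.5, 0.2], hidden_layer, output_layer)
print(f"flap value: {flap_value:.3f}")
```

With untrained weights the flap value is arbitrary. The point is only the data flow from observations through the hidden layer to a single action value.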

Observations (Inputs)

- Distance between the bird and the top of the screen. This distance is normalized between 0 and 1 by dividing it by the distance between the floor and the ceiling.

- Distance between the bird and the floor, normalized the same way as the previous observation.

- Vertical velocity of the bird's Rigidbody, normalized between -1 and 1 by dividing it by the bird's maximum Y speed (the velocity is signed, so a falling bird gives negative values).

- Y difference between the bird and the center position of the next obstacle's opening, normalized between -1 and 1.

All observations are kept between -1 and 1 so that every value fed to the neural network is on the same scale.
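The four observations can be sketched as follows. The `BirdState` fields are hypothetical stand-ins for values read from the Unity scene. The post does not say what the opening offset is divided by, so dividing it by the floor-to-ceiling distance is an assumption. The clamping is an extra safety measure that the post does not describe.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class BirdState:
    """Hypothetical snapshot of the scene values the observations are built from."""
    y: float                 # bird's vertical position
    velocity_y: float        # signed vertical velocity (negative when falling)
    floor_y: float
    ceiling_y: float
    max_speed_y: float       # bird's maximum vertical speed
    opening_center_y: float  # center of the next obstacle's opening

def clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))

def collect_observations(s: BirdState) -> List[float]:
    """Return the four observations, each kept within [-1, 1]."""
    height = s.ceiling_y - s.floor_y
    to_top = clamp((s.ceiling_y - s.y) / height, 0.0, 1.0)
    to_floor = clamp((s.y - s.floor_y) / height, 0.0, 1.0)
    velocity = clamp(s.velocity_y / s.max_speed_y, -1.0, 1.0)
    # Assumption: the opening offset is also divided by the floor-to-ceiling distance.
    to_opening = clamp((s.opening_center_y - s.y) / height, -1.0, 1.0)
    return [to_top, to_floor, velocity, to_opening]

state = BirdState(y=3.0, velocity_y=-2.5, floor_y=0.0, ceiling_y=10.0,
                  max_speed_y=5.0, opening_center_y=5.0)
print(collect_observations(state))  # [0.7, 0.3, -0.5, 0.2]
```

Because the bird always stays between the floor and the ceiling, the offset to the opening's center can never exceed that distance. Dividing by it therefore keeps the value within [-1, 1].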

Actions (Continuous Outputs)

- Flap wings action. The neural network outputs a normalized value between 0 and 1, and the bird only jumps if that value is greater than 0.5.
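Here is a minimal sketch of turning that continuous output into a jump. The 0.5 threshold comes from the post. `FLAP_VELOCITY` and the choice to replace the vertical velocity on a flap are hypothetical, a common Flappy Bird mechanic rather than the author's confirmed code.

```python
JUMP_THRESHOLD = 0.5   # from the post: the bird jumps only above 1/2
FLAP_VELOCITY = 4.0    # hypothetical upward speed given by one flap

def should_flap(action_value: float) -> bool:
    """Turn the network's continuous output into a discrete flap decision."""
    return action_value > JUMP_THRESHOLD

def apply_action(velocity_y: float, action_value: float) -> float:
    """Hypothetical flap mechanic: a jump replaces the current vertical velocity."""
    return FLAP_VELOCITY if should_flap(action_value) else velocity_y

print([should_flap(v) for v in (0.2, 0.5, 0.51, 0.9)])  # [False, False, True, True]
print(apply_action(-3.0, 0.8))  # 4.0
```

Because the comparison is strict, an output of exactly 0.5 does not trigger a jump.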

Reinforcement Learning Rewards

- The bird agent gains 0.1 reward points at each decision interval if its current vertical velocity is heading toward the center of the next obstacle's opening.

- The bird agent loses 1 reward point and is reset as soon as it hits an obstacle, the top of the screen or the floor.
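The two reward rules can be sketched as a single function evaluated once per decision. The function name and signature are hypothetical. Reading "heading toward the center" as "the velocity has the same sign as the offset to the opening's center" is one possible interpretation, not necessarily the author's exact test.

```python
from typing import Tuple

HEADING_REWARD = 0.1   # per decision, from the post
CRASH_PENALTY = -1.0   # on hitting an obstacle, the top of the screen or the floor

def decision_reward(bird_y: float, velocity_y: float,
                    opening_center_y: float, crashed: bool) -> Tuple[float, bool]:
    """Return (reward, episode_done) for one decision interval."""
    if crashed:
        return CRASH_PENALTY, True  # the agent is then reset
    # Interpretation: moving toward the center means the velocity points the
    # same way as the offset from the bird to the opening's center.
    offset = opening_center_y - bird_y
    heading_to_center = offset * velocity_y > 0
    return (HEADING_REWARD if heading_to_center else 0.0), False

# Bird below the opening and rising: rewarded.
print(decision_reward(bird_y=3.0, velocity_y=2.0, opening_center_y=5.0, crashed=False))
# Bird below the opening and falling: no reward.
print(decision_reward(bird_y=3.0, velocity_y=-2.0, opening_center_y=5.0, crashed=False))
```

The small, frequent positive reward steers the bird toward the opening between crashes. The large negative reward makes dying clearly worse than any short run of good positioning.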

Learning Process

The bird agent is set to make a decision every 5 frames, so it decides whether to jump at that frequency. Before the training session, we can set how long we want the agents to train, measured in decision steps. If the observations, actions and rewards are well designed, the longer the agents train, the better the results will be. On the other hand, overtraining agents could make their actions look mechanical and less adaptable to new situations.

For this project, I tested different training session durations. After a 50k-decision-step training session, birds averaged a score of 10 obstacles and often failed when there was a large height difference between the previous obstacle's opening and the next one. A 200k-decision-step session made the birds a lot better, but few got past 100 obstacles. Finally, after a 1-million-decision-step training session, birds can keep going almost indefinitely and only very rarely fail. Note that training is a lot faster if you have multiple learning agents learning simultaneously in your scene. For this project, I trained with 15 bird agents at the same time.
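The arithmetic behind decision steps can be sketched like this. The 5-frame interval, the 15 agents and the three step budgets come from the post. Two things are assumptions: that the step budget is shared across all 15 agents (this depends on how the trainer counts steps), and the 60 fps rate, which converts frames into game time rather than wall-clock training time.

```python
import math

DECISION_INTERVAL = 5  # from the post: one decision every 5 frames

def is_decision_frame(frame: int, interval: int = DECISION_INTERVAL) -> bool:
    """True on the frames where the agent observes and picks a new action."""
    return frame % interval == 0

def frames_per_agent(total_decision_steps: int, num_agents: int,
                     interval: int = DECISION_INTERVAL) -> int:
    """Frames each agent plays, assuming all agents share one step budget."""
    steps_per_agent = math.ceil(total_decision_steps / num_agents)
    return steps_per_agent * interval

decisions = [f for f in range(20) if is_decision_frame(f)]
print(decisions)  # [0, 5, 10, 15]

for budget in (50_000, 200_000, 1_000_000):
    frames = frames_per_agent(budget, num_agents=15)
    hours = frames / 60 / 3600  # hypothetical 60 fps of game time
    print(f"{budget:>9,} steps -> {frames:,} frames per agent (~{hours:.2f} h of play)")
```

Under the shared-budget assumption, adding agents divides the game time each agent must play. This is consistent with the post's observation that training with many agents at once is much faster.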

Sources

Unity Plugin source and documentation - Unity ML-Agents Plugin

Environment and TensorFlow Setup

- Setting Up Tensorflow For Use With Unity

- Unity3D Machine Learning Setup for ML-Agents on Windows 10 with Tensorflow

Sprite Assets - Unity for 2D Live Session: Making A Flapping Bird Style Game